The counter type A/D converter is one of the entry-level ADC architectures. Earlier posts covered the working principle of ADCs and the simultaneous (flash) type ADC. This post looks at the working principle of the counter type A/D converter.

Why use a counter type A/D converter?

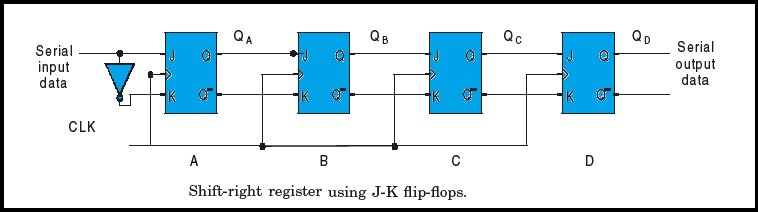

In a simultaneous (flash) type ADC, an n-bit converter needs $2^n - 1$ comparators. This number grows quickly with resolution. To avoid it, a higher-resolution A/D converter can be built with a single comparator by using a variable reference voltage. One such design is the counter type A/D converter, shown in the block schematic below.
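To put numbers on the comparator requirement, here is a minimal sketch that compares the two approaches. The function name `comparators_needed` is made up for this illustration.

```python
def comparators_needed(n_bits):
    """Return (flash, counter) comparator counts for an n-bit converter."""
    flash = 2 ** n_bits - 1   # flash ADC: one comparator per threshold level
    counter = 1               # counter type ADC: a single comparator
    return flash, counter

for n in (4, 8, 10):
    flash, counter = comparators_needed(n)
    print(f"{n:2d} bits: flash needs {flash}, counter type needs {counter}")
```

For 10 bits the flash design already needs 1023 comparators, while the counter type still needs one.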

Working Process

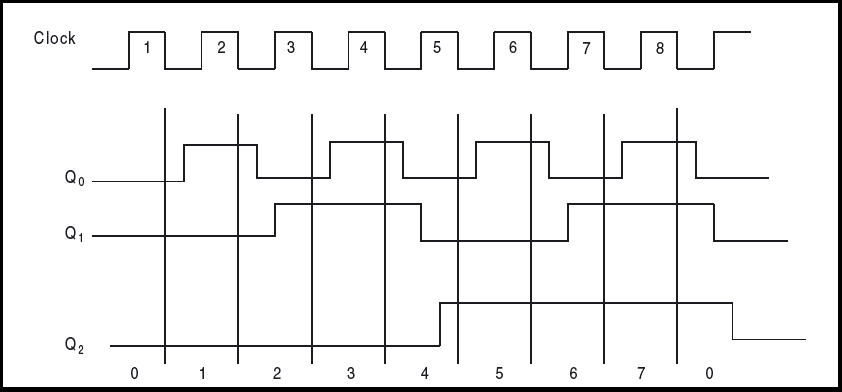

The circuit above works as follows. Firstly, the counter is reset to all 0s. Secondly, when a convert signal appears on the start line, the input gate is enabled and clock pulses are applied to the clock input of the counter. The counter advances through its normal binary count sequence. Thirdly, the counter output feeds a D/A converter, and the staircase waveform generated at the output of the D/A converter forms one input of the comparator. The other input of the comparator is the analogue input signal. Fourthly, as soon as the D/A converter output exceeds the analogue input voltage, the comparator changes state. Finally, this change of state disables the gate and the counter stops. The counter output at that instant is the required digital output corresponding to the analogue input signal.
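The steps above can be sketched as a small software simulation. This is only an illustration under stated assumptions: an ideal D/A converter with a step of `v_ref / 2**n_bits`, and a counter that stops at full scale if the input is out of range. The names `counter_adc`, `v_in`, `n_bits` and `v_ref` are made up for this sketch and do not come from any real part.

```python
def counter_adc(v_in, n_bits=4, v_ref=16.0):
    """Simulate one conversion of a counter type ADC.

    Returns (digital_code, clock_pulses_used).
    """
    levels = 2 ** n_bits
    step = v_ref / levels      # assumed ideal DAC step (1 LSB)
    count = 0                  # counter is reset to all 0s
    pulses = 0
    # The gate stays enabled while the DAC output has not exceeded v_in.
    # The second condition stops the counter at full scale (assumption).
    while count * step <= v_in and count < levels - 1:
        count += 1             # one clock pulse advances the counter
        pulses += 1
    return count, pulses

code, pulses = counter_adc(5.3)
print(f"code = {code:04b}, clock pulses = {pulses}")
```

With a 1 V step, an input of 5.3 V stops the counter at 6, the first staircase level that exceeds the input, after 6 clock pulses. A larger input takes proportionally more pulses.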

Advantage

The counter type A/D converter provides a very good method for digitizing to a high resolution. This method is much simpler than the simultaneous method for higher-resolution A/D converters.

Disadvantage

The drawback of this converter is its long conversion time. Since the counter always starts from the all 0s position and counts through its normal binary sequence, it may require as many as $2^n$ counts before the conversion is complete. The average conversion time can be taken as $2^n / 2 = 2^{n-1}$ counts. One clock cycle gives one count.

Time Calculation

An example makes this clearer. With a four-bit converter and a 1 MHz clock (one count every 1 µs), the average conversion time is $2^{n-1} = 2^3 = 8$ counts, or 8 µs. For a 10-bit converter of this type at the same 1 MHz clock rate, it is $2^9 = 512$ counts, or about 0.5 ms. In fact, the conversion time doubles for each bit added to the converter. Thus, the resolution can be improved only at the cost of a longer conversion time. This makes the counter type A/D converter unsuitable for digitizing rapidly changing analogue signals.
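The same arithmetic can be checked with a few lines of code. This is a minimal sketch. The function name `conversion_times` is made up for this illustration, and it assumes one count per clock cycle, as stated above.

```python
def conversion_times(n_bits, clock_hz):
    """Return (average, worst_case) conversion time in seconds."""
    period = 1.0 / clock_hz                  # one count per clock cycle
    worst = (2 ** n_bits) * period           # up to 2^n counts
    average = (2 ** (n_bits - 1)) * period   # 2^n / 2 = 2^(n-1) counts
    return average, worst

for n in (4, 8, 10):
    avg, worst = conversion_times(n, 1_000_000)
    print(f"{n:2d} bits: average {avg * 1e6:.0f} us, worst case {worst * 1e6:.0f} us")
```

Each extra bit doubles both figures, which matches the 8 µs and roughly 0.5 ms averages in the example.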
