An important question in digital electronics is how a digital-to-analogue converter (DAC) works. Most real-world signals are analogue, that is, continuous in nature. To process such a signal digitally, we first have to convert it into digital form. After processing, we convert the result back into analogue form using a digital-to-analogue converter. The round trip is needed because a digital system can only process data in digital form.

How Does a Digital-to-Analogue Converter (DAC) Work?

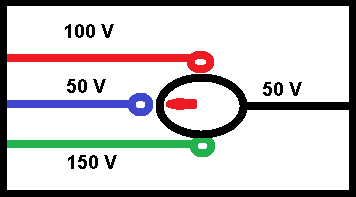

The input of a digital-to-analogue (D/A) converter is an n-bit binary signal, available in parallel form. Normally, digital signals are available at the output of latches or registers, and the voltages correspond to logic 0 and logic 1. The logic levels (0 or 1) do not have precisely fixed voltages, however. Therefore, these voltages are not applied directly to the converter for digital-to-analogue conversion. Instead, they are used to operate digitally controlled switches. Each switch takes one of two positions depending on the logic level (logic 0 or logic 1) of its bit, and connects a precisely fixed reference voltage, V(1) or V(0), to the converter input for logic 1 and logic 0 respectively.

Working formula

This brings us to the working formula of a digital-to-analogue converter (DAC). The analogue output voltage Vo of an n-bit straight binary D/A converter is related to the digital input by the equation

$$V_o = K\left(2^{n-1} b_{n-1} + 2^{n-2} b_{n-2} + 2^{n-3} b_{n-3} + \cdots + 2^{2} b_{2} + 2 b_{1} + b_{0}\right)$$

where $K$ is a proportionality factor equal to the step size in volts, and

$b_i = 1$ if bit $i$ of the digital input is 1,

$b_i = 0$ if bit $i$ of the digital input is 0 ($b_0$ is the LSB and $b_{n-1}$ the MSB).
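The working formula can be tried out numerically. The following is a minimal Python sketch, not a model of any particular device: the function name `dac_output` and its parameters (`code`, `n_bits`, `step_volts` standing in for K) are hypothetical names chosen for this illustration.

```python
def dac_output(code: int, n_bits: int, step_volts: float) -> float:
    """Ideal output voltage of an n-bit straight-binary D/A converter.

    step_volts plays the role of K (the step size in volts).
    """
    if not 0 <= code < 2 ** n_bits:
        raise ValueError("code does not fit in n_bits")
    # Extract the bits b0 (LSB) up to b(n-1) (MSB) from the input word.
    bits = [(code >> i) & 1 for i in range(n_bits)]
    # Weighted sum: each bit contributes 2**i times the step size.
    return step_volts * sum(bit * 2 ** i for i, bit in enumerate(bits))


# A 4-bit converter with a 0.5 V step and input 1010 (decimal 10).
print(dac_output(0b1010, 4, 0.5))  # 5.0
```

Because the weighted sum is just the decimal value of the input word, the ideal output is simply K times the input code.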

Types of DAC

Two types of D/A converter are in common use:

- Weighted-resistor D/A converter, and

- R-2R ladder D/A converter.

Specification

The main specifications to understand when using a digital-to-analogue converter are resolution, accuracy, conversion speed, dynamic range, nonlinearity (NL), differential nonlinearity (DNL) and monotonicity.

Resolution

The resolution of a D/A converter is the number of states (2^n) into which the full-scale range is divided or resolved, where n is the number of bits in the input digital word. The higher the number of bits, the better the resolution. An eight-bit D/A converter has 2^8 = 256 states and therefore 255 resolvable steps. It is said to have a percentage resolution of (1/255) × 100 = 0.39 %, or simply eight-bit resolution.
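As a quick check of the eight-bit figures, here is a small Python sketch. The function name `resolution` and the `full_scale_volts` parameter are hypothetical, and the step size assumes that the full-scale output is the voltage produced by the all-ones input code.

```python
def resolution(n_bits: int, full_scale_volts: float):
    """Return (states, steps, percent_resolution, step_volts)."""
    states = 2 ** n_bits          # number of distinct input codes
    steps = states - 1            # number of steps between those codes
    percent = 100.0 / steps       # one step as a percentage of full scale
    # Assumption: full scale is reached at the all-ones code.
    step_volts = full_scale_volts / steps
    return states, steps, percent, step_volts


states, steps, percent, step_volts = resolution(8, 2.55)
print(states, steps, round(percent, 2), round(step_volts, 4))
```

For eight bits and a 2.55 V full scale this gives 256 states, 255 steps, about 0.39 % and a step of about 10 mV.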

Accuracy

The accuracy of a D/A converter is the difference between the actual analogue output and the ideal expected output when a given digital input is applied. Sources of error include the gain error (or full-scale error), the offset error (or zero-scale error), nonlinearity errors and drift in all of these factors.
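Offset and gain error can be estimated from two end-point measurements. The sketch below uses one common convention (offset error is the output at the all-zeros code, gain error is the deviation of the measured span from the ideal span once the offset is removed). Datasheets differ in their exact definitions, so treat the function name `offset_and_gain_error` and the convention itself as assumptions.

```python
def offset_and_gain_error(measured_zero: float,
                          measured_full: float,
                          ideal_full: float):
    """Return (offset_error, gain_error) in volts.

    measured_zero: output measured for the all-zeros code (ideal value 0 V)
    measured_full: output measured for the all-ones code
    ideal_full:    ideal output for the all-ones code
    """
    # Offset (zero-scale) error: how far the zero code sits from 0 V.
    offset_error = measured_zero
    # Gain error: measured span minus ideal span, with the offset removed.
    gain_error = (measured_full - measured_zero) - ideal_full
    return offset_error, gain_error


offset, gain = offset_and_gain_error(0.002, 2.557, 2.55)
print(round(offset, 4), round(gain, 4))
```

With these made-up readings the converter shows roughly 2 mV of offset error and 5 mV of gain error.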

Conversion Speed or Settling Time

The conversion speed of a D/A converter can be expressed in terms of its settling time. The settling time is the time that elapses, after a change in the digital input code, before the analogue output reaches its final value and stays within a specified error band. General-purpose D/A converters have a settling time of several microseconds, while some high-speed D/A converters have a settling time of a few nanoseconds. The settling time specification for D/A converter type number AD9768 from Analog Devices, USA, for instance, is 5 ns.
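Settling time can be read off a sampled output waveform. The Python sketch below assumes the code change happens at time zero and that the samples are given as two equal-length lists. The function name `settling_time` and the sample data are made up for illustration.

```python
def settling_time(times, values, final_value, error_band):
    """Earliest sample time after which the output stays inside
    final_value +/- error_band. Returns None if it never settles."""
    settled_at = None
    for t, v in zip(times, values):
        if abs(v - final_value) <= error_band:
            # Inside the band: remember when this run of samples started.
            if settled_at is None:
                settled_at = t
        else:
            # Left the band again (overshoot or ringing): start over.
            settled_at = None
    return settled_at


times_ns = [0, 1, 2, 3, 4, 5]
volts = [0.0, 0.8, 1.1, 0.97, 1.01, 1.0]
print(settling_time(times_ns, volts, final_value=1.0, error_band=0.05))  # 3
```

Note that the overshoot at 2 ns does not count as settled. Only the last entry into the error band matters.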

Dynamic Range

This is the ratio of the largest output to the smallest output, excluding zero, expressed in dB. For linear D/A converters it is 20 log10(2^n), which is approximately equal to 6n dB. For companding-type D/A converters, discussed in Section 12.3, it is typically 66 or 72 dB.
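The 6 dB-per-bit rule of thumb is easy to confirm numerically. This is a short Python sketch of the linear-converter formula only, and the function name `dynamic_range_db` is hypothetical.

```python
import math


def dynamic_range_db(n_bits: int) -> float:
    """Dynamic range of a linear n-bit D/A converter: 20*log10(2**n) dB."""
    return 20 * math.log10(2 ** n_bits)


for n in (8, 12, 16):
    # Compare the exact value with the 6n approximation.
    print(n, round(dynamic_range_db(n), 2), 6 * n)
```

Each extra bit doubles the ratio, which adds 20 log10(2), or about 6.02 dB.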

Nonlinearity and Differential Nonlinearity

Nonlinearity (NL) is the maximum deviation of the analogue output voltage from a straight line drawn between the end points. It is expressed as a percentage of the full-scale range or in terms of LSBs.

Differential nonlinearity (DNL) is the worst-case deviation of the step between any two adjacent analogue outputs from the ideal one-LSB step size.
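Both figures can be computed from a table of measured outputs, one per input code. The Python sketch below uses the end-point method described above, taking the ideal LSB as the measured span divided by the number of steps. The function name `dnl_and_nl` and the sample voltages are made up for illustration.

```python
def dnl_and_nl(outputs):
    """Worst-case DNL and NL, both in LSBs, using the end-point method.

    outputs[k] is the measured output for input code k, for every code
    from all zeros to all ones.
    """
    steps = len(outputs) - 1
    # End-point LSB: the straight line joins the first and last outputs.
    lsb = (outputs[-1] - outputs[0]) / steps
    # DNL: each actual step compared with the ideal one-LSB step.
    dnl = [(outputs[k + 1] - outputs[k]) / lsb - 1 for k in range(steps)]
    # NL: each output compared with the end-point straight line.
    nl = [(outputs[k] - (outputs[0] + k * lsb)) / lsb
          for k in range(len(outputs))]
    return max(dnl, key=abs), max(nl, key=abs)


# A deliberately poor 2-bit converter: the step from code 1 to 2 is too big.
worst_dnl, worst_nl = dnl_and_nl([0.0, 1.0, 2.5, 3.0])
print(worst_dnl, worst_nl)
```

In this example the oversized middle step is 1.5 LSB wide, so the worst-case DNL is 0.5 LSB, and code 2 sits 0.5 LSB above the end-point line.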

Monotonicity

In an ideal D/A converter, the analogue output should increase by an identical step size for every one-LSB increment in the digital input word. When the input of such a converter is fed from the output of a counter, the converter output will be a perfect staircase waveform. In such cases, the converter is said to exhibit perfect monotonicity. A D/A converter is considered monotonic if its analogue output either increases or remains the same, but never decreases, as the digital input code advances in one-LSB steps. Monotonicity is guaranteed if the magnitude of the DNL error never exceeds 1 LSB. Since the DNL error can be at most twice the worst-case nonlinearity error, a worst-case nonlinearity of no more than 1/2 LSB is sufficient.
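The definition translates directly into a check over the measured staircase. This Python sketch assumes `outputs` holds one measured voltage per input code, in ascending code order. The function name `is_monotonic` and the sample values are hypothetical.

```python
def is_monotonic(outputs) -> bool:
    """True if the output never decreases as the code advances by one LSB."""
    # Compare every output with its successor; equal values are allowed.
    return all(later >= earlier
               for earlier, later in zip(outputs, outputs[1:]))


print(is_monotonic([0.0, 1.0, 1.0, 2.0]))  # True: a flat step is allowed
print(is_monotonic([0.0, 1.0, 0.9, 2.0]))  # False: the output dips at code 2
```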
